Blog

How to reduce data compute costs with smarter querying

Published on March 25, 2026

Eugene Yukin

Why did that audience cost 4x more this week?

It happens all the time. Same audience brief. Same campaign. But the warehouse bill is suddenly four times higher.

Why? Because someone ran SELECT *, skipped pruning filters, or rebuilt the entire audience list from scratch instead of sending only the changes. For marketers, this means paying for noise. Scheduled ETL syncs often transfer data that’s already stale, like a nightly dump into a marketing platform that guarantees the customer data driving your campaigns is at least six hours old by the time it lands.

The fix isn’t complicated: query only what you need, only when it changes. Stop paying for full rebuilds when a delta would do the same job at a fraction of the cost.

Explore how MessageGears’ warehouse-native model makes this possible.

The cost drivers

Warehouse compute costs aren’t magic. They follow a predictable pattern, and once you know the pattern, you can break it. Here are the main culprits:

Bytes scanned. Failing to prune partitions, clusters, or columns. Every unnecessary column in a SELECT * is money you’re lighting on fire.

Join shape. Fan-outs, cross joins, and unbounded windows that multiply rows exponentially. A poorly shaped join can turn a 10-second query into a 10-minute one.

Rebuilds vs. increments. Refreshing full audiences from scratch when you only need the diffs. If 99% of the audience didn’t change since yesterday, rebuilding the whole thing is paying 100x for a 1% update.

Duplicate work. The same logic rebuilt independently in BI, CDP, and ESP tools. Three teams writing three versions of “active customers in the last 90 days” is three times the compute for the same answer.

Exports. Sending full files to third-party tools instead of deltas. A nightly full-file export to an ad platform when only 2% of records changed is 50x more data movement than necessary.

Each one compounds cost, slows performance, and creates brittle workflows that break at the worst possible time.

Smart querying for audience segmentation

Here’s how to cut warehouse spend without sacrificing speed or accuracy.

- Design “feature views” for marketing. Centralize traits like LTV bands, affinities, and eligibility flags into concise, columnar views. Only expose what marketing needs. This eliminates SELECT * at the source and ensures every downstream query is lean by default.

- Filter first, join second. Apply partition or date filters upfront, then join to the narrowed sets (orders, sessions). For membership checks, use semi/anti joins instead of heavy left joins. The order of operations matters more than most people realize. Filtering a 500M-row table down to 2M before joining it to another table is orders of magnitude cheaper than joining first and filtering after.

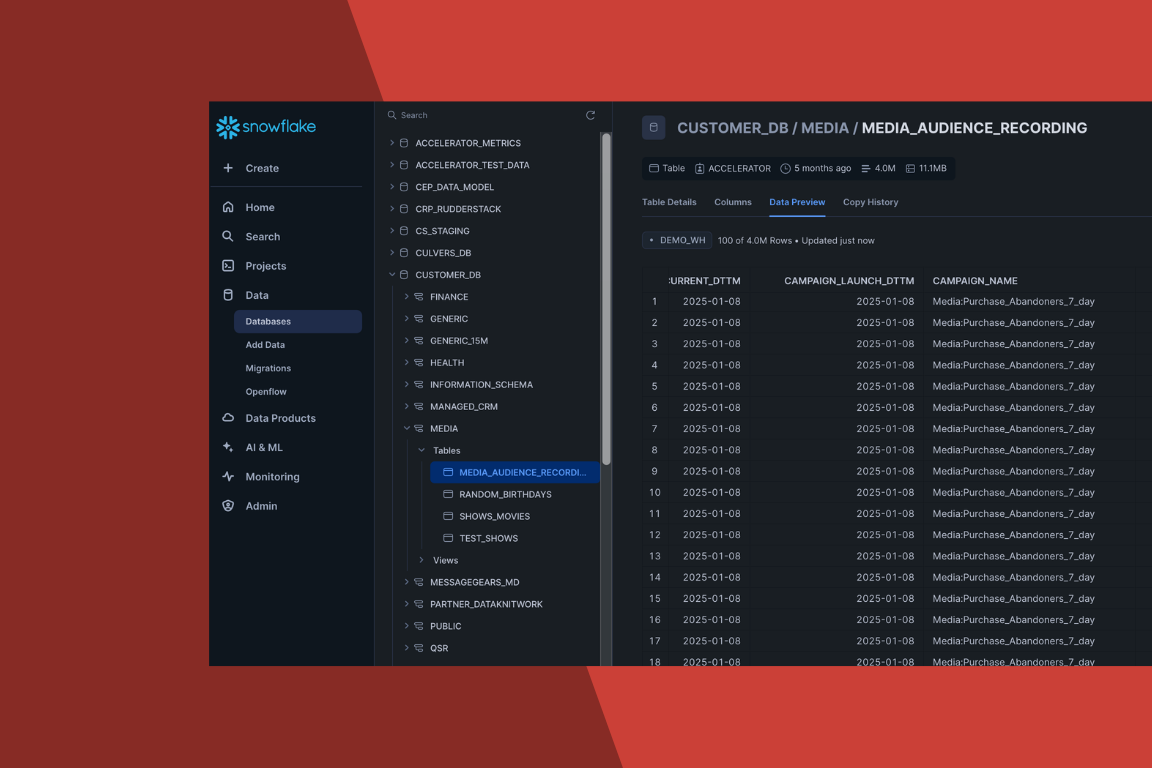

- Replace full rebuilds with delta audiences. Maintain an audience_membership table with is_member, valid_from, and valid_to columns. A nightly job updates only what changed (adds and removes) and exports just those diffs. For a 20M-profile audience where 200K records change daily, this cuts compute by 99%.

- Use windows wisely. Skip unbounded windows that chew through massive tables. Partition by time and pre-aggregate where possible. A window function scanning 2 years of clickstream data when you only need the last 30 days is the kind of silent cost killer that shows up on your invoice but never in your campaign results.

- Approximate where exact isn’t needed. Use approx_count_distinct for sizing or sampling for QA. Save the exact runs for final activation. When you’re deciding whether an audience is roughly 2M or 2.1M, you don’t need to scan every row to find out.

Discover how enterprises are implementing these principles in our case studies.

Table design that saves money

Your schema is as important as your SQL. A few smart design choices can slash costs before anyone writes a single query.

Partition on event time, cluster on common filters. Partitioning by date means time-bounded queries only scan the relevant slices. Clustering on customer_id or status further narrows what the engine touches. Together, they can reduce bytes scanned by 80–90% on typical marketing queries.

Keep “skinny” audience tables. Store keys and flags only in your audience tables; keep heavy attributes (full profiles, transaction histories) in separate tables that get joined only when needed. Most audience builds don’t need 200 columns. They need 10.

Use materialized views for expensive traits. If you’re recomputing LTV scores or purchase affinities in every query, materialize them once and refresh on a schedule. The compute cost of one daily refresh is a fraction of recomputing on every campaign build.

Run engine-specific maintenance regularly. VACUUM, OPTIMIZE, ANALYZE. These commands keep table statistics fresh so the query planner makes smarter decisions. Stale stats lead to bad execution plans, which lead to unnecessary full-table scans.

For more details, see Snowflake’s documentation and Google BigQuery’s best practices.

Query patterns: do’s and don’ts

Do:

- Column pruning: select named fields, not *. Every unnecessary column is bytes scanned you’re paying for.

- Predicate pushdown: WHERE event_date >= CURRENT_DATE – 30 before joins. Let the engine skip partitions early.

- Use CTEs or materialized temps to reuse subsets. If you’re referencing the same filtered dataset three times in one query, compute it once.

- Stick to distinct keys in joins. Joining on non-unique keys creates fan-outs that multiply rows and cost.

Don’t:

- Cross join to “shape” data. There’s almost always a better way, and the cost difference is exponential.

- Join raw clickstream to the full customer master without pre-filtering. This is the single most expensive mistake we see in marketing analytics queries.

- Recompute features in every query. That’s what feature views are for.

- Export full files to partners daily “just in case.” If the partner supports incremental loads, use them. Most do.

Owned vs. third-party channels: where the savings hit

Owned channels (email, SMS, mobile, web). Query in place at send-time. No duplicate data copies, no intermediate staging tables. The warehouse is the activation layer. This is where warehouse-native architecture delivers the most dramatic cost reduction because you’re eliminating the entire “copy data into the ESP” step.

Third-party (ads, walled gardens). Push only deltas via warehouse-native reverse ETL. Standardize ID maps (ad IDs, hashed emails) once to avoid repeated heavy joins against raw identity tables.

The principle is the same in both cases: move less data, scan fewer bytes, and never rebuild what you can update incrementally.

See how this approach works in MessageGears.

Governance = cost control

Smart governance keeps spending in check without slowing teams down.

Resource monitors and query budgets. Set spend limits by workspace or campaign so a runaway query doesn’t blow through your monthly budget in an afternoon.

Query tagging. Tag every query with its campaign, team, and use case so you can attribute cost precisely. When someone asks “why did warehouse spend spike this month?”, you can answer in minutes instead of days.

Publish “blessed” SQL and block anti-patterns. Maintain a repository of approved query templates and feature views. When teams use the blessed versions, costs stay predictable. When they freelance, costs don’t.

Observability. Freshness tests, row-count drift alerts, and bytes-scanned dashboards. You should know what every campaign costs to compute, and whether that cost is trending up or down.

Strong governance is a key reason enterprises trust MessageGears for secure, cost-efficient activation.

Additional cost-saving tips to reduce data compute

Beyond query and schema-level optimizations, there are also some infrastructure-level cost levers that can deliver equal or greater savings with minimal effort. Some additional approaches to consider include:

- Query result caching: Most modern data warehouses cache results for repeated identical queries at zero additional compute cost. For marketing teams that re-run the same audience counts or dashboards throughout the day, leveraging cached segments can lead to significant compute savings.

- Warehouse auto-suspend and right-sizing: For marketing teams that only need to run campaigns during business hours, suspending compute overnight and on weekends is free money. You can easily configure auto-suspend timeouts on your warehouse and/or pause data clusters during known off-hours.

- Cold storage tiering: Long-term data storage can often become ~50% cheaper after 90 days of no edits. For historical campaign data that’s queried infrequently, this can be a meaningful and easy win.

- Multi-cluster warehouse scaling: By configuring minimum/maximum cluster counts, you can prevent over-provisioning during quiet periods while still handling burst workloads during campaign launches.

30-60-90 day plan to cut compute

Days 0–30: Find and fix the spikes

Tag the top 10 queries by bytes scanned. Add partition and date predicates, remove SELECT *, and materialize the heaviest intermediate steps. This alone will typically cut 20–30% of waste because the worst offenders are usually a small number of queries running on autopilot.

Days 31–60: Design for reuse

Create feature views and a canonical audience membership table. Convert 2 full-rebuild exports into delta syncs. Add caching and materialized views for weekly cohorts. The goal here is to stop paying for the same computation happening independently in multiple tools.

Days 61–90: Operationalize savings

Ship query budgets and tagging. Publish a “cost-smart SQL” checklist for all teams that touch the warehouse. Migrate one owned channel to read-in-place activation and one ad partner to delta exports. Review KPIs and deprecate redundant pipelines.

KPI stack

Track savings with a clear set of metrics:

- Compute: Bytes scanned per audience, total credits consumed, cache hit rate.

- Speed: Audience build time (p95), trigger latency.

- Ops: Failed jobs, re-runs, reconciliation hours.

- Spend: Export size (GB/day), third-party processing fees avoided with deltas.

Objections and honest answers

“We need exact counts, not approximations.”

Use approximate functions for sizing and QA. Run exact counts once for final activation. You get 95% of the accuracy at 5% of the cost for everything except the final send list.

“Our partner tool requires a full file.”

Most partners support weekly baseline plus daily deltas. Ask them. And if they truly don’t, that’s worth factoring into your vendor evaluation, because full-file requirements in 2026 are a red flag.

“Marketing can’t learn new SQL.”

They don’t have to. Feature views and pre-built templates hide the complexity. Marketers interact with named segments and audience builders, not raw SQL. The data team writes the queries once; marketing reuses them forever.

FAQs

What’s the fastest way to lower warehouse costs?

Add partition filters and column pruning to your top-spend queries, then switch full audience rebuilds to delta exports. These two changes alone typically deliver 30–50% savings.

Should we partition or cluster first?

Partition by time for pruning (this has the biggest impact on bytes scanned). Cluster by the most common filter key (customer_id, status) for further optimization within partitions.

Can we cut costs without losing performance?

Yes. Pushing filters early and using materialized views usually speeds things up at the same time. Lower cost and faster execution aren’t trade-offs; they’re the same optimization.

How do we make sure teams follow best practices?

Ship blessed feature views, saved query templates, query tagging, and spend budgets. Make the right way the easy way, and the expensive way harder to do by accident.

Faster queries, lower bills, same results

Smart querying means less data movement, fewer scans, and the same (or better) performance. The enterprises getting this right aren’t doing anything exotic. They’re applying basic engineering discipline to marketing workloads: filter early, join smart, rebuild only what changed, and export only what’s needed.

The warehouse is already the most powerful tool in your stack. Stop overpaying to use it.